Volume Viewer

In this project, we implemented a system to display and visualize volume datasets, such as those acquired from Magnetic Resonance Imaging (MRI) or Computed Tomography (CT) scans. In these datasets, a 3D density field is sampled uniformly in space, producing a grid of voxels that can then be used to render the underlying model. Different materials have different densities, which allows the rendering system to vary the display by material; for example, air has a very low density, while flesh has a high density, though not as high as bone.

Display Techniques

In traditional 3D computer graphics, objects are represented by their boundary rather than their volume. The typical approach is to store a mesh of triangles and render those triangles to the screen. This works well for most types of objects, which are seen only from the outside and have no meaningful interior, and graphics hardware has been designed with boundary representations in mind. A volume representation, however, stores information about the entire contents of the object. This poses challenges for visualization. Most importantly, how is the image information in the volume rendered to the screen, and how are the features of interest isolated from the surrounding volume and displayed to the user? We discuss the first issue in this section and the second in the next.

When displaying the volume, we would like the rendering algorithm to be fast enough to allow interactive manipulation. One fast method uses textured polygons. We draw a series of quads that "slice" through the volume, and by assigning appropriate texture coordinates, we render each quad with the volume data it passes through. Traditionally, graphics cards support two-dimensional textures: a standard 2D image is submitted to the card as a texture. Each vertex of a polygon to be drawn is assigned a texture coordinate, a value from 0 to 1 along each texture axis that maps the vertex into the texture. These coordinates are interpolated as the polygon is rasterized and used to select the appropriate texels from the texture.
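
As a rough illustration of the lookup the hardware performs for each rasterized fragment, the sketch below samples a 2D texture at interpolated normalized coordinates (s, t) with bilinear filtering. It is a CPU-side sketch, not code from our renderer; the function name and the list-of-rows texture layout are assumptions, and it follows the OpenGL convention that texel i has its center at (i + 0.5) / size, with clamp-to-edge addressing.

```python
import math


def bilinear_lookup(texture, s, t):
    """Sample a 2D texture (list of rows of floats) at normalized (s, t) in [0, 1].

    Uses OpenGL-style texel centers and clamp-to-edge addressing.
    """
    height = len(texture)
    width = len(texture[0])
    # Map [0, 1] to texel space; texel i covers [i, i + 1) with its center at i + 0.5.
    x = s * width - 0.5
    y = t * height - 0.5
    x0 = math.floor(x)
    y0 = math.floor(y)
    fx = x - x0
    fy = y - y0

    def texel(i, j):
        # Clamp-to-edge: out-of-range indices reuse the border texel.
        i = min(max(i, 0), width - 1)
        j = min(max(j, 0), height - 1)
        return texture[j][i]

    # Blend horizontally on two rows, then blend the two rows vertically.
    top = (1 - fx) * texel(x0, y0) + fx * texel(x0 + 1, y0)
    bottom = (1 - fx) * texel(x0, y0 + 1) + fx * texel(x0 + 1, y0 + 1)
    return (1 - fy) * top + fy * bottom
```

For a 2x2 texture `[[0.0, 1.0], [2.0, 3.0]]`, sampling at (0.5, 0.5) lands exactly between all four texel centers and returns their average, 1.5.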

A more recent addition to graphics cards is 3D texturing, in which a complete texture volume is downloaded to the card and 3D texture coordinates are used instead of 2D coordinates to look up values in the volume. This is more natural for volume rendering; however, it tends to be implemented less efficiently. In our system we implemented volume rendering with both 2D and 3D texturing, and we discuss the implementation of both methods below.

2D Object-Aligned Rendering

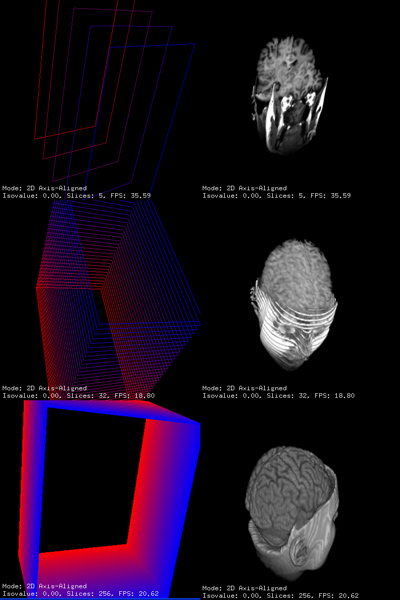

Consumer graphics hardware has long supported 2D textures, so we implemented a 2D texture render mode. This works by rendering a stack of texture-mapped quads almost perpendicular to the view direction, with each texture containing an axis-aligned slice of the 3D data. As the view direction changes, the size and direction of the rendered slices change so that they are rendered along the axis most parallel to the view direction.

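This axis is selected by transforming the 3 principal axes with the view rotation matrix. Each transformed axis is then dotted with the view vector (0, 0, -1), and the axis whose dot product has the largest magnitude is taken as the axis most parallel to the view direction. The sign of that dot product indicates which end of the slice stack is farther from the viewer, and therefore the order in which the slices must be drawn for back-to-front blending.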
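
The axis selection can be sketched as follows. This is a minimal illustration, not our exact code: the function name is hypothetical, and it assumes the view rotation is given as a 3x3 list of rows, so that the image of the i-th principal axis is the i-th column. It compares absolute dot products so that an axis pointing toward the viewer counts as just as parallel as one pointing away.

```python
def most_parallel_axis(rotation, view=(0.0, 0.0, -1.0)):
    """Pick the principal axis most parallel to the view direction.

    rotation: 3x3 view rotation as a list of rows; the image of the i-th
    principal axis under the rotation is the i-th column.
    Returns (axis, sign): axis is 0, 1 or 2 (x, y, z); sign is +1 if the
    positive end of that axis points away from the viewer, else -1.
    """
    best_axis, best_dot = 0, 0.0
    for i in range(3):
        transformed = [rotation[r][i] for r in range(3)]
        d = sum(a * v for a, v in zip(transformed, view))
        # Compare magnitudes: an axis pointing toward the viewer is as
        # parallel to the view direction as one pointing away.
        if abs(d) > abs(best_dot):
            best_axis, best_dot = i, d
    return best_axis, (1 if best_dot > 0 else -1)
```

With the identity rotation the result is `(2, -1)`: the z axis is chosen, and its positive end faces the viewer, so back-to-front drawing starts at the negative end. In the 2D mode, the axis picks which of the three texture stacks to draw and the sign picks the drawing order.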

Because each axis direction uses a different (perpendicular) view of the 3D data, 3 separate sets of textures must be used. Thus, the memory requirements for the 3 stacks of 2D textures are at least 3 times those of a single 3D texture.

The brain model shown here has 84 slices of 256x256 data. The aspect ratio is 1:1:2, so the spacing between slices is twice the spacing between voxels within a slice. To improve viewing quality, an interpolated 2D slice is inserted between each pair of neighboring slices so that all planes are drawn with equal spacing. This is necessary to avoid shifts in brightness when the drawing axis changes. Slices are interpolated using graphics hardware multitexturing, blending neighboring textures with fractional weights (0.5 in this case). The result is bilinear filtering within each slice and linear filtering between slices, giving results on par with the trilinear filtering used in the 3D texturing mode.
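
The slice insertion can be pictured with the CPU-side sketch below, which computes the same weighted blend that the two texture units produce. The function names and the list-of-rows slice layout are assumptions for illustration only; our renderer does the blend on the graphics card.

```python
def lerp_slices(a, b, w):
    """Blend two equally sized slices (lists of rows): (1 - w) * a + w * b."""
    return [[(1 - w) * pa + w * pb for pa, pb in zip(row_a, row_b)]
            for row_a, row_b in zip(a, b)]


def resample_stack(slices, w=0.5):
    """Insert one blended slice between each pair of neighboring slices."""
    out = []
    for a, b in zip(slices, slices[1:]):
        out.append(a)
        out.append(lerp_slices(a, b, w))
    out.append(slices[-1])
    return out
```

For the 84-slice brain volume this yields 84 + 83 = 167 planes, halving the between-slice spacing so that it matches the in-plane voxel spacing.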

3D Object-Aligned Rendering

Unlike 2D slicing, which requires the overhead of a texture stack along each axis and so triples the size of the data, 3D texturing allows the volume to be submitted to the graphics card once, in its entirety. To implement object-aligned rendering, we select the axis most parallel to the view direction, as described previously, and then draw the slices along that axis. For each vertex of each slice, we set both the coordinate in object space and the equivalent coordinate in texture space. To ensure proper blending, the slices are drawn from back to front. 3D texturing is a very simple and direct method for rendering volumes.
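
A minimal sketch of the slice generation, assuming object space is an axis-aligned box [-h, h] on each axis and texture space is the unit cube. The function name, the `far_end_positive` flag and the `half_extent` parameter are hypothetical; a non-cubic `half_extent` could be used to honor a dataset's voxel aspect ratio.

```python
def object_aligned_slices(num_slices, axis, far_end_positive, half_extent=(1.0, 1.0, 1.0)):
    """Yield object-aligned slice quads for 3D texturing, ordered back to front.

    Each quad is a list of four (object_xyz, texture_str) vertex pairs.
    Object space is the box [-h, h] on each axis; texture space is [0, 1]^3.
    axis: 0, 1 or 2, the principal axis most parallel to the view direction.
    far_end_positive: True if the positive end of that axis is farther from
    the viewer, so drawing starts there.
    """
    u, v = [i for i in range(3) if i != axis]  # the two in-slice axes
    corners = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
    for k in range(num_slices):
        # Sample at slice centers so no slice lies exactly on a volume face.
        r = (k + 0.5) / num_slices
        if far_end_positive:
            r = 1.0 - r  # start at the far (positive) end
        quad = []
        for cu, cv in corners:
            tex = [0.0, 0.0, 0.0]
            tex[axis], tex[u], tex[v] = r, cu, cv
            # The object-space vertex is the same point rescaled from [0, 1] to [-h, h].
            obj = tuple((2.0 * t - 1.0) * h for t, h in zip(tex, half_extent))
            quad.append((obj, tuple(tex)))
        yield quad
```

In immediate-mode OpenGL, each vertex pair would be emitted as a `glTexCoord3f` call followed by a `glVertex3f` call inside a quad.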

One additional advantage of 3D texturing over simple 2D texturing is that intermediate slices are interpolated without any additional code. If 512 slices are drawn for a 256-slice volume, the additional slices will contain trilinearly interpolated voxel values. As pointed out above, this can also be achieved with 2D texturing using multitexturing, but doing so is more complicated. Unfortunately, this simplicity in coding comes at a cost at runtime. In our experiments, the 3D texturing code was significantly slower than the 2D code: the brain dataset rendered with 256 slices at 50 frames per second with 2D texturing, compared to about 15 fps with 3D texturing. Still, we expect the performance of 3D texturing to improve in the future.
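
To see where the extra slices sample the volume, the sketch below computes the between-slice part of trilinear filtering: for each drawn slice, the two voxel slices it falls between and the blend weight. It assumes OpenGL-style voxel centers at (j + 0.5) / n, drawn slices at (k + 0.5) / m, and clamp-to-edge behavior; the function name is hypothetical.

```python
import math


def slice_weights(num_drawn, num_voxel_slices):
    """Return (lower voxel slice, upper voxel slice, weight) for each drawn slice.

    This is the linear-in-r component of trilinear filtering; bilinear
    filtering within each voxel slice supplies the other two components.
    """
    out = []
    for k in range(num_drawn):
        r = (k + 0.5) / num_drawn             # texture coordinate of drawn slice k
        z = r * num_voxel_slices - 0.5        # position in voxel-index space
        lo = max(0, min(num_voxel_slices - 1, math.floor(z)))
        hi = min(lo + 1, num_voxel_slices - 1)
        w = min(max(z - lo, 0.0), 1.0)       # clamp-to-edge at both ends
        out.append((lo, hi, w))
    return out
```

With 512 drawn slices over 256 voxel slices, consecutive drawn slices land a quarter and three quarters of the way between neighboring voxel slices, so every drawn slice is a genuine interpolation rather than a copy.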

3D View-Aligned Rendering

Hardware support for 3D textures also allows the use of view-aligned slices, as opposed to object-aligned slices. The slices are always drawn parallel to the viewing plane, eliminating the popping seen in object-aligned texturing when the slicing axis switches. In our system, this is implemented by keeping the modelview matrix as the identity, drawing slices aligned with the user's view, and using the texture matrix to rotate the volume.
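
The effect of the texture matrix can be sketched on the CPU: a screen-parallel quad is placed at each depth, and its vertices are mapped back into the unrotated volume with the inverse (transposed) rotation before being scaled into [0, 1]. This sketch assumes the volume occupies the cube [-1, 1]^3 centered at the origin of the view-aligned frame (any translation along the view axis does not affect texture coordinates); the function names and the `half_size` parameter are hypothetical. Quads of half-size sqrt(3) cover the cube in any orientation, so texture coordinates outside [0, 1] must be rejected, for example with a clamp-to-border mode and a fully transparent border color.

```python
import math


def view_aligned_texcoords(rotation, depth, half_size=math.sqrt(3.0)):
    """Texture coordinates for one screen-parallel slice quad at a given depth.

    rotation: 3x3 volume rotation R as a list of rows. The quad is drawn
    with an identity modelview; each vertex p is mapped to
    tex = 0.5 + 0.5 * R^T p, which is what a texture matrix of
    translate(0.5) * scale(0.5) * R^T would compute.
    """
    corners = [(-1.0, -1.0), (1.0, -1.0), (1.0, 1.0), (-1.0, 1.0)]
    coords = []
    for cx, cy in corners:
        p = (cx * half_size, cy * half_size, depth)
        # R^T p: the inverse of a rotation is its transpose.
        q = [sum(rotation[r][c] * p[r] for r in range(3)) for c in range(3)]
        coords.append(tuple(0.5 + 0.5 * qi for qi in q))
    return coords


def slice_depths(num_slices, half_size=math.sqrt(3.0)):
    """Slice depths ordered back to front for a camera looking down -z."""
    step = 2.0 * half_size / num_slices
    return [-half_size + (k + 0.5) * step for k in range(num_slices)]
```

Because only the texture coordinates change as the user rotates the volume, the slice geometry itself never changes orientation, which is why the slicing artifacts move smoothly instead of popping.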

We noticed experimentally, however, that view-aligned slicing tends to look much worse than object-aligned slicing. While the object-aligned slicing has popping when transitioning from one axis to another, the view-aligned slicing has slicing artifacts that change from frame to frame (though at least they move smoothly, and do not pop). We feel that the occasional artifact in the object-aligned slicing is better than the constantly changing artifacts in the view-aligned slicing, and as a result we generally use object-aligned slicing.

Identifying Features

Most volumes have a single scalar value per voxel. This scalar is converted to a 3-valued RGB color through the use of a transfer function. In many cases we also assign an opacity, referred to as the alpha value of the voxel. The simplest transfer function maps the scalar directly to all four RGBA components. This results in a grey image that is darker in dense areas and almost completely transparent in areas with very low density, such as air, which allows the exterior of the object to be seen easily. This is the default transfer function in our system.

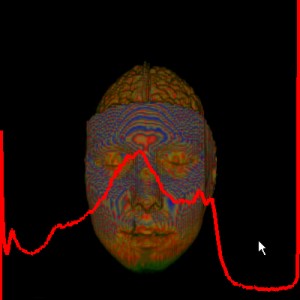

Often, however, this does not adequately distinguish between nearby values. Also, if areas of interest with low density, such as brain tissue, lie inside high-density areas such as bone and skin, the low-density areas will be occluded. This leads us to user-specified transfer functions, in which the user specifies the mapping from voxel values to RGBA values, often by drawing a series of line segments that represent the function. In many cases this is assisted by a histogram (shown to the right) that shows the relative frequency of each density. The user can then determine which peaks in the histogram correspond to materials in the volume and color them appropriately.
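
Both pieces can be sketched for 8-bit voxel data: a histogram of voxel values, and a 256-entry table for one channel of a transfer function drawn as line segments. The function names and the control-point format are assumptions, not the format of any particular tool. The default transfer function described above is the special case of a single segment from (0, 0.0) to (255, 1.0), used for all four channels.

```python
def histogram(voxels, bins=256):
    """Count how many voxels take each 8-bit value."""
    counts = [0] * bins
    for value in voxels:
        counts[value] += 1
    return counts


def piecewise_linear(points, levels=256):
    """Tabulate one channel of a transfer function drawn as line segments.

    points: control points [(x0, y0), (x1, y1), ...] sorted by x, with x in
    [0, levels - 1]; outside the first and last point the end value is held.
    """
    table = []
    for x in range(levels):
        if x <= points[0][0]:
            table.append(points[0][1])
        elif x >= points[-1][0]:
            table.append(points[-1][1])
        else:
            for (x0, y0), (x1, y1) in zip(points, points[1:]):
                if x0 <= x <= x1:
                    t = (x - x0) / (x1 - x0) if x1 != x0 else 0.0
                    table.append(y0 + t * (y1 - y0))
                    break
    return table
```

A user who spots a histogram peak around some density range would add control points that raise the alpha channel over that range and keep it near zero elsewhere.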

In our system, we can import transfer functions that have been exported from a tool written by Diego Nehab. These are then used to assign colors to voxel values through the use of OpenGL paletted textures. This extension allows a texture to be specified as a combination of a color palette, which usually contains 256 color entries in full 32-bit RGBA form, and a list of color indices, for which we use the data in our voxel grid. The graphics card then stores only 8 bits per voxel, plus the 1024-byte color palette, rather than the 32 bits per voxel that an RGBA volume would require. The mapping from color index to RGBA value is done on the fly as the voxels are rasterized.
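
The sketch below mirrors the palette lookup on the CPU and works through the memory arithmetic for the 256x256x84 brain volume (before any interpolated slices are added). On the card, the lookup is done by the paletted-texture extension (EXT_paletted_texture) during rasterization; the function names here are hypothetical.

```python
def apply_palette(indices, palette):
    """Expand 8-bit color indices into RGBA entries of a 256-entry palette."""
    return [palette[i] for i in indices]


def texture_bytes(dims, bytes_per_voxel):
    """Storage for a width x height x depth texture."""
    width, height, depth = dims
    return width * height * depth * bytes_per_voxel


brain_dims = (256, 256, 84)                          # the brain dataset's grid
paletted = texture_bytes(brain_dims, 1) + 256 * 4    # 8-bit indices + 1024-byte palette
rgba = texture_bytes(brain_dims, 4)                  # full 32-bit RGBA volume
```

This gives 5,506,048 bytes for the paletted volume (5.25 MiB of indices plus 1 KiB of palette) versus 22,020,096 bytes (21 MiB) for RGBA. Changing the transfer function only requires uploading a new 1 KiB palette rather than the whole volume.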

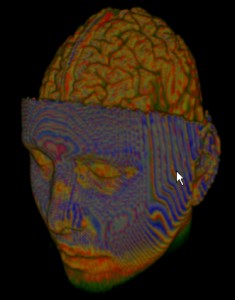

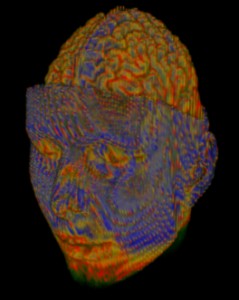

Also, others have shown experimentally that artifacts can occur when color and opacity are interpolated independently. Instead, they suggest pre-multiplying the color values by the corresponding alpha value, resulting in an associated color, and using the associated colors in the final rendering. As the comparison images show, the image using associated colors gives better results; note especially how much better defined the features of the eyes are.
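
A small example shows why associated colors help. Interpolating halfway between an opaque red voxel and a fully transparent green voxel should never produce any green, yet plain RGBA interpolation does; interpolating associated colors does not. The helper names are assumptions for illustration, and `over` is the standard back-to-front compositing rule for associated colors.

```python
def associate(rgba):
    """Pre-multiply color by alpha to get an associated color."""
    r, g, b, a = rgba
    return (r * a, g * a, b * a, a)


def lerp(p, q, t):
    """Linear interpolation between two RGBA tuples, as texture filtering does."""
    return tuple((1 - t) * x + t * y for x, y in zip(p, q))


def over(src, dst):
    """Back-to-front compositing of associated colors: src + (1 - src_alpha) * dst."""
    return tuple(s + (1 - src[3]) * d for s, d in zip(src, dst))


opaque_red = (1.0, 0.0, 0.0, 1.0)
clear_green = (0.0, 1.0, 0.0, 0.0)   # transparent voxel whose color should never show

mixed_plain = lerp(opaque_red, clear_green, 0.5)                          # (0.5, 0.5, 0.0, 0.5): green leaks in
mixed_assoc = lerp(associate(opaque_red), associate(clear_green), 0.5)    # (0.5, 0.0, 0.0, 0.5)
```

In OpenGL, slices holding associated colors are composited back to front with `glBlendFunc(GL_ONE, GL_ONE_MINUS_SRC_ALPHA)`, which matches `over` above.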

A linear transfer function generator can also be used to generate a transfer function with two linear ramps controlling the opacity and color of the volume. This function of four variables (a, b, c, d) in the range [0, 1] returns 0 from [0, a), a linear increase to a peak amplitude from [a, b), the peak amplitude from [b, c), a linear decrease from [c, d), and 0 from [d, 1].
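
The generator described above is a trapezoid-shaped function. A minimal sketch for one channel, assuming 0 <= a <= b <= c <= d <= 1 and a `peak` parameter for the peak amplitude (the function name and parameter names are hypothetical):

```python
def linear_tf(x, a, b, c, d, peak=1.0):
    """Trapezoid transfer function on [0, 1].

    0 on [0, a), linear ramp up on [a, b), peak on [b, c),
    linear ramp down on [c, d), 0 on [d, 1].
    Requires 0 <= a <= b <= c <= d <= 1.
    """
    if x < a or x >= d:
        return 0.0
    if x < b:
        return peak * (x - a) / (b - a)   # b > a here, since a <= x < b
    if x < c:
        return peak
    return peak * (d - x) / (d - c)       # d > c here, since c <= x < d
```

To fill a 256-entry palette channel, evaluate it at each index, e.g. `[linear_tf(i / 255, a, b, c, d) for i in range(256)]`.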

Viewing Cross-Sections

As we have pointed out, one of the strengths of volume rendering is that the volume retains information about the interior of the model. In our system, we can easily generate cross sections and see inside the model. This can be very useful, for example, when viewing the interior of a head, where the different passages and cavities, as well as the structure of the brain, can be seen clearly.

We create cross sections through the use of OpenGL clipping planes. We store 6 planes, one for each side of a box that surrounds the object. Using keyboard controls, the user can shrink the dimensions of the box. All voxels outside the box are discarded, allowing the user to view inside. Additionally, the box can be rotated independently, allowing cross sections along arbitrary planes.
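
The clipping box can be sketched as six plane equations using the convention of OpenGL's `glClipPlane`, which keeps points where a*x + b*y + c*z + d >= 0. The function names and the ordering of the `extents` tuple are assumptions; each extent is the box's distance from the center along one half-axis, in [0, 1].

```python
def make_box_planes(extents):
    """Six clip planes (a, b, c, d) bounding a box around the origin.

    extents = (+x, -x, +y, -y, +z, -z) distances from the center.
    A point is kept where a*x + b*y + c*z + d >= 0.
    """
    px, nx, py, ny, pz, nz = extents
    return [(-1.0, 0.0, 0.0, px), (1.0, 0.0, 0.0, nx),   # x <= px, x >= -nx
            (0.0, -1.0, 0.0, py), (0.0, 1.0, 0.0, ny),   # y <= py, y >= -ny
            (0.0, 0.0, -1.0, pz), (0.0, 0.0, 1.0, nz)]   # z <= pz, z >= -nz


def inside(point, planes):
    """True if the point survives all six clip planes."""
    x, y, z = point
    return all(a * x + b * y + c * z + d >= 0 for a, b, c, d in planes)
```

Rotating the box independently amounts to rotating each plane normal (a, b, c) by the box rotation before the planes are handed to OpenGL; since the box is centered at the origin, d is unchanged.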

One interesting feature of our system is automatic rotation, which nicely demonstrates the use of clipping planes. Using the trackball interface, the user can rotate the volume on the screen. Pressing the space bar makes the system continue the last rotation indicated by the user. While the model is auto-rotating, however, the clipping planes stay fixed, so as the volume rotates through them, the user sees different cross sections where the planes pass through the volume.

Downloads

Source code and executable (win32) - download

Brain volume data - download

Controls

The program should be run as follows:

`volview <volfile>`

The following keyboard controls are available:

- 'm' to switch between 2D and 3D texture modes

- ',' to switch between axis-aligned and view-aligned slices in 3D texture mode

- 'c' to enable clipping mode

- SPACE to enter auto-rotate mode

- 'v' to toggle viewing of the clipping cube in clipping mode

- 'V' to toggle clipping cube rotate mode in clipping mode

- 'h' to toggle histogram display

- 'H' to toggle axes display

- 'd' to toggle wireframe display in 2D texture mode

- 'p' to toggle Maximum Intensity Projection display mode

- 'l' to generate a linear transfer function with variables w, W, e, and E

- '+' and '-' to adjust the currently selected variable some constant amount

- '?' to prompt for a value for the currently selected variable

- 'a' to select the alpha cutoff variable (default = 0, range = [0, 1])

- 'x', 'X', 'y', 'Y', 'z', and 'Z' to select the clipping extent variables for positive and negative x, y, and z axes (default = 1, range = [0, 1])

- 's' to select the scale variable (default = 1, range = [0.1, 10.0])

- 'r' to select the slice resolution variable (default = 256, range = [1, 1024])

- 'w', 'W', 'e', and 'E' to select the variables for the linear transfer function generator (range = [0, 1])

- 'P' to select the peak variable for the linear transfer function generator